Two Claude Code releases published one day apart (v2.1.49 on February 19, v2.1.50 on February 20) bring native worktree management for agents, six memory leak fixes, and new enterprise hooks. GitHub Copilot introduces persistent memory that lets agents retain a repository’s conventions across sessions. ElevenLabs cuts Text-to-Speech API latency by up to 40% and launches an A/B testing system for voice agents.

Claude Code v2.1.49 and v2.1.50: worktrees, enterprise hooks and freed memory

v2.1.49 — February 19, 2026

February 19, 2026 — Anthropic releases Claude Code v2.1.49, focused on isolating agents in git worktrees and new capabilities for enterprise deployments.

| Feature | Detail |
|---|---|
| `--worktree` / `-w` flag | Start Claude directly in an isolated git worktree |
| `isolation: "worktree"` for subagents | Subagents run in a dedicated temporary git worktree |
| `background: true` in agent definitions | Force an agent to always run as a background task |
| `ConfigChange` hook event | Triggered when configuration files change; intended for enterprise security auditing |
| SDK model info extended | Fields supportsEffort, supportedEffortLevels, supportsAdaptiveThinking |
| MCP OAuth step-up auth | Progressive auth and discovery caching |
| Ctrl+F | Kill background agents (double press) |
| Sonnet 4.5 1M removed from Max plan | Replaced by Sonnet 4.6 with 1M token context |

The ConfigChange hook addresses an enterprise need: detecting changes to configuration files (CLAUDE.md, settings) in real time so that they can feed audit and compliance pipelines.
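To make the audit use case concrete, here is a minimal sketch of a handler that a ConfigChange hook could invoke. It assumes the hook passes a JSON payload on standard input and that the payload exposes `hook_event_name`, `session_id` and `file_path`; those field names, the helper names and the log file name are assumptions for illustration, so check the hooks reference for the actual schema.

```python
import json
import sys
from datetime import datetime, timezone
from typing import TextIO


def build_audit_record(payload: dict) -> dict:
    """Reduce a hook payload to the fields an audit trail would keep."""
    return {
        "logged_at": datetime.now(timezone.utc).isoformat(),
        # Assumed field names: the real payload schema may differ.
        "event": payload.get("hook_event_name", "unknown"),
        "session_id": payload.get("session_id"),
        "file_path": payload.get("file_path"),
    }


def handle(stream: TextIO, log_path: str) -> dict:
    """Read one JSON payload from `stream` and append it to a JSON Lines log."""
    record = build_audit_record(json.load(stream))
    with open(log_path, "a", encoding="utf-8") as log:
        log.write(json.dumps(record) + "\n")
    return record
```

A script wired to the hook would end with a call such as `handle(sys.stdin, "config-audit.jsonl")`. Writing one JSON object per line keeps the log append-only and easy to forward to an existing compliance pipeline.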

v2.1.50 — February 20, 2026

February 20, 2026 — Claude Code v2.1.50 completes the picture with new CLI commands for agents and a wave of memory leak fixes.

New features:

| Feature | Detail |
|---|---|
| `WorktreeCreate` / `WorktreeRemove` hooks | Events to set up and tear down a custom VCS when isolating worktrees |
| `isolation: worktree` in agent definitions | Agents declare their own worktree isolation directly in their definition |
| `claude agents` CLI | New command to list all agents configured in the project |
| `CLAUDE_CODE_DISABLE_1M_CONTEXT` | Environment variable to disable the 1M token context if needed |
| Opus 4.6 fast mode + 1M context | Fast mode now includes the full 1M token context window |
| `startupTimeout` for LSP servers | Configure startup timeout for LSP servers |
| `/extra-usage` in VS Code | New command available in VS Code sessions |
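For readers unfamiliar with worktrees, the sketch below shows what isolating an agent amounts to with plain git: one `git worktree add` before the agent starts and one `git worktree remove` when it finishes. This is not how Claude Code implements the feature; the function name, directory layout and branch naming are hypothetical. A team using another VCS would presumably perform the equivalent steps from its WorktreeCreate and WorktreeRemove hooks.

```python
from pathlib import Path


def worktree_commands(repo: Path, name: str) -> tuple[list[str], list[str]]:
    """Return the git commands that create and later remove an isolated worktree."""
    # Hypothetical layout: worktrees live next to the repo, one directory per agent.
    path = repo.parent / f"{repo.name}-worktrees" / name
    branch = f"agent/{name}"
    create = ["git", "-C", str(repo), "worktree", "add", "-b", branch, str(path)]
    remove = ["git", "-C", str(repo), "worktree", "remove", "--force", str(path)]
    return create, remove
```

Each command list can be passed to `subprocess.run(cmd, check=True)`. Keeping command construction separate from execution makes the setup and teardown steps easy to log or dry-run.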

CLAUDE_CODE_SIMPLE extended: it now also disables MCP tools, attachments, hooks and the loading of CLAUDE.md, for a truly minimal experience.

6 memory leaks fixed:

| Leak | Component |
|---|---|
| Teammate agent tasks not garbage-collected | Task system |
| Task state objects not removed from AppState | AppState |
| LSP diagnostic data not cleaned up | LSP integration |
| Task output not freed in long-running sessions | Output buffer |
| CircularBuffer items retained after cleanup | CircularBuffer |
| ChildProcess / AbortController retained after shell execution | Shell execution |
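The "items retained after cleanup" entry describes a classic class of leak that is easy to reproduce in any garbage-collected language. The sketch below is a generic illustration in Python, not Claude Code's implementation: the `RingBuffer` and `Payload` names are hypothetical. It contrasts a cleanup that only resets counters with one that also drops the stored references.

```python
import weakref


class RingBuffer:
    """Fixed-size buffer that overwrites its oldest entry when full."""

    def __init__(self, capacity: int) -> None:
        self._slots = [None] * capacity
        self._next = 0
        self._count = 0

    def push(self, item) -> None:
        self._slots[self._next] = item
        self._next = (self._next + 1) % len(self._slots)
        self._count = min(self._count + 1, len(self._slots))

    def __len__(self) -> int:
        return self._count

    def clear_leaky(self) -> None:
        # Resets the bookkeeping only: old items stay referenced by the slots.
        self._next = 0
        self._count = 0

    def clear(self) -> None:
        # Also drops the references so the items can be reclaimed.
        self._slots = [None] * len(self._slots)
        self._next = 0
        self._count = 0


class Payload:
    """Stand-in for a large object such as captured task output."""


buf = RingBuffer(4)
item = Payload()
ref = weakref.ref(item)  # lets us observe whether the object is still alive
buf.push(item)
del item

buf.clear_leaky()
print("after leaky clear:", len(buf), "items, object alive:", ref() is not None)

buf.clear()
print("after full clear:", len(buf), "items, object alive:", ref() is not None)
```

In a short session the difference is invisible, because the buffer reports zero items either way. In a long-running agent session the retained slots can pin large outputs in memory indefinitely.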

Additional fixes: /mcp reconnect no longer freezes the CLI; resumed sessions with resolved symlink paths are now visible; native modules now load correctly on Linux systems with glibc older than 2.30 (e.g. RHEL 8); headless mode (-p flag) performs better.

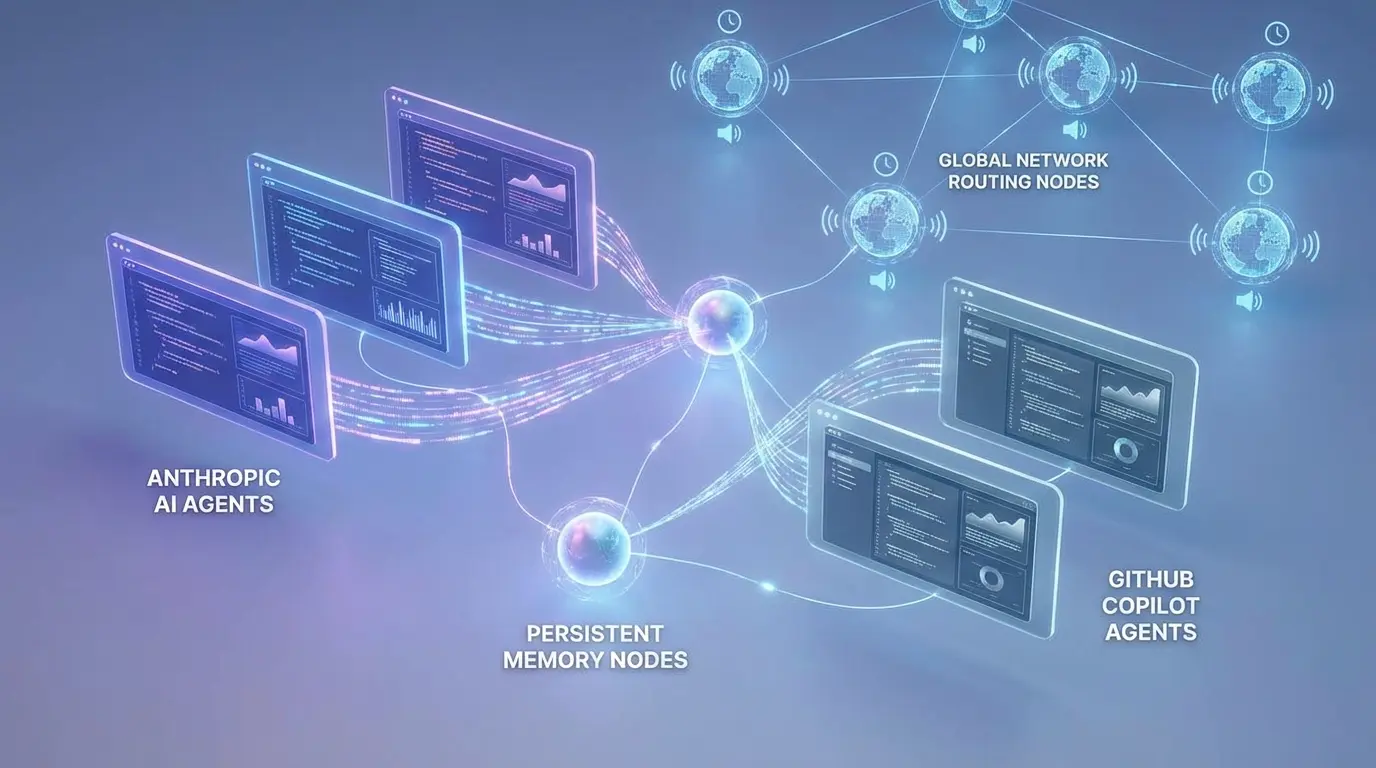

GitHub Copilot: persistent memory of repository conventions

February 22, 2026 — GitHub announces that Copilot now has persistent memory. Agents available on the GitHub platform — native Copilot, Claude, and Codex — can learn a repository’s conventions, style preferences and practices, then remember them in subsequent sessions.

In practice, an agent no longer needs to be reminded every session of naming rules, PR templates or commit conventions for a project — it memorizes them after the first interaction.

The feature is available now in:

- VS Code Insiders

- Copilot CLI

- Copilot coding agent

- Copilot code review

Codex Community Meetups: global program launched

February 20, 2026 — OpenAI launches a program of community meetups dedicated to Codex through its ambassador network. The goal: let developers meet locally to build projects, compare workflows and share usage patterns.

The developers.openai.com/codex/community/meetups page lists 20 upcoming events, including:

| City | Date |
|---|---|
| Melbourne, Australia | February 26, 2026 |
| Toronto, Canada | February 26, 2026 |
| Singapore | February 28, 2026 |
| Berlin, Germany | March 3, 2026 |
| Warsaw, Poland | March 4, 2026 |
| Orlando, FL, USA | March 12, 2026 |

🔗 Codex Meetups 🔗 Tweet @OpenAIDevs

ElevenLabs: Flash v2.5 up to 40% faster and A/B testing for voice agents

Flash v2.5 — TTS latency reduction

February 20, 2026 — ElevenLabs announces a latency reduction of 20 to 40% for the majority of international developers using the Text-to-Speech API.

| Parameter | Value |
|---|---|
| Latency reduction | 20 to 40% lower for international developers |
| TTFB (Time to First Byte) | 50 ms (unchanged) |
| Mechanism | Optimized global network routing |
| Models affected | Flash v2.5 and Turbo |

The 50 ms TTFB remains the baseline. The reduction applies to perceived end-to-end latency and comes from a global network routing layer added on top of the existing infrastructure.

Experiments in ElevenAgents — A/B testing voice agents

February 19, 2026 — ElevenLabs introduces “Experiments” in ElevenAgents: an A/B testing system to optimize voice agent performance under real-world conditions.

The workflow is simple: create a variant of an existing agent, route a controlled portion of traffic to that variant, measure impact on key metrics, then promote the winning variant.

Metrics tracked:

- CSAT (customer satisfaction)

- Containment rate (rate of resolution without escalation)

- Conversion

Teams can test a range of configurations: prompt structure, workflow logic, voice, personality. The approach lets them base improvements on measured results rather than guesswork.
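The workflow described above (split traffic, measure, promote) can be sketched independently of any vendor API. The example below does not use the ElevenAgents API: the function names, the `arm` and `escalated` fields, and the hashing scheme are all assumptions chosen for illustration. It routes conversations deterministically by hashing their ID, then computes the containment rate per arm.

```python
import hashlib
from collections import defaultdict


def assign_variant(conversation_id: str, variant_share: float) -> str:
    """Deterministically route a conversation to 'control' or 'variant'."""
    digest = hashlib.sha256(conversation_id.encode("utf-8")).digest()
    # Map the first 4 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:4], "big") / 2**32
    return "variant" if bucket < variant_share else "control"


def containment_rate(conversations: list[dict]) -> dict[str, float]:
    """Share of conversations per arm that were resolved without escalation."""
    totals: dict[str, int] = defaultdict(int)
    contained: dict[str, int] = defaultdict(int)
    for conv in conversations:
        totals[conv["arm"]] += 1
        if not conv["escalated"]:
            contained[conv["arm"]] += 1
    return {arm: contained[arm] / totals[arm] for arm in totals}
```

Hashing the conversation ID rather than drawing a random number means the same conversation always lands in the same arm, which keeps the experiment consistent across retries and reconnects. A real comparison would also need enough traffic per arm for the difference in rates to be statistically meaningful.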

Minor updates

1st anniversary Claude Code (Feb 22) — Boris Cherny (@bcherny), product lead for Claude Code at Anthropic, celebrates the tool’s first anniversary with an in-person gathering. Claude Code itself started as a hackathon project. 🔗 Tweet @bcherny

Codex CLI v0.104.0 (Feb 18) — Support for environment variables WS_PROXY / WSS_PROXY for proxying websockets in network proxy environments, notifications when threads are archived/unarchived, and distinct approval IDs for multiple approvals in a single shell execution flow. 🔗 Codex Changelog

Codex App 26.217 (Feb 17) — Drag-and-drop to reorder queued messages, warning when the selected model is downgraded, and improved fuzzy file search. 🔗 Codex Changelog

Copilot CLI: “Make Contribution” skill (Feb 21) — New agent skill for Copilot CLI: it automatically finds a repository’s contribution guidelines and follows them before opening a PR (templates, code conventions, review process). Available open source in the awesome-copilot repo. 🔗 SKILL.md

What this means

The two Claude Code releases published this week (v2.1.49 and v2.1.50) form a coherent whole: Anthropic is building the infrastructure for multi-agent teams working in isolated environments. The WorktreeCreate/WorktreeRemove hooks let teams integrate their own version control systems (not just git), while the six memory leak fixes directly target long-running autonomous agent sessions, a critical point for production workflows.

GitHub Copilot’s persistent memory changes the value proposition of code agents: from “assistant that answers questions” to “collaborator that knows your project.” The ability to memorize conventions across sessions reduces the main friction for adopting agents in teams with established standards.

ElevenLabs continues to mature its infrastructure for voice agents at scale: the latency reduction of up to 40% and native A/B testing in ElevenAgents are the kind of tools product teams need to move from prototype to production deployment.

Sources

- Claude Code CHANGELOG v2.1.49-2.1.50

- Copilot persistent memory — @github

- Codex Community Meetups — @OpenAIDevs

- Codex Meetups — Official page

- ElevenLabs Flash v2.5 + Experiments — @elevenlabsio

- 1st anniversary Claude Code — @bcherny

- Codex CLI 0.104.0 + App 26.217 Changelog

- Copilot “Make Contribution” skill — awesome-copilot

This document was translated from the fr version into the en language using the gpt-5-mini model. For more information on the translation process, see https://gitlab.com/jls42/ai-powered-markdown-translator